@graham-xrf, half-decent crystal oscillators are available for not too much cash. We used them in automotive radars, so they had to be very inexpensive, and we paired them with 16-bit converters. Although ADCs are sensitive to clock noise, the clock is usually not the dominant noise contributor.
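For a sense of scale, two standard rules of thumb compare the ideal quantization-limited SNR of an N-bit converter (6.02N + 1.76 dB) with the SNR ceiling set by RMS clock jitter t_j on a full-scale sine at f_in (-20·log10(2π·f_in·t_j)). Both assume a full-scale sine and treat each mechanism as the only noise source. The 1 MHz input and 1 ps jitter below are hypothetical values chosen for illustration, not figures from the radar described here.

```python
import math


def ideal_quantization_snr_db(bits: int) -> float:
    """Ideal SNR of an N-bit ADC for a full-scale sine: 6.02*N + 1.76 dB."""
    return 6.02 * bits + 1.76


def jitter_limited_snr_db(f_in_hz: float, rms_jitter_s: float) -> float:
    """SNR ceiling from RMS clock/aperture jitter for a full-scale sine at f_in."""
    return -20.0 * math.log10(2.0 * math.pi * f_in_hz * rms_jitter_s)


# Hypothetical example: 16-bit ADC, 1 MHz input, 1 ps RMS clock jitter.
print(f"quantization limit: {ideal_quantization_snr_db(16):.2f} dB")
print(f"jitter limit:       {jitter_limited_snr_db(1e6, 1e-12):.2f} dB")
```

With these assumed numbers the jitter ceiling sits above the 16-bit quantization limit, which is consistent with the point that a reasonable crystal is rarely what sets the noise floor.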

Often it was a poor front end, or even sloppy signal processing, that led to the classic "we are 10 dB worse than we ought to be." (I've seen far worse on some systems.) I'm obviously not telling you anything you don't already know, but I thought I'd point it out for others (if anyone is still following). On many occasions I've installed extremely low-phase-noise oscillators into systems and seen no difference. In theory they should have helped, but the dominant noise contributors were far, far greater and swamped the oscillator's contribution, so there was no detectable difference, even statistically over more than 10,000 trials. In one case it was a bitter pill for the software team to swallow, since it was in fact their algorithms, not the oscillator, that were degrading the SNR. Once those algorithms were identified and changed, we achieved the predicted performance.
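To put numbers on why a much better oscillator can make no measurable difference: uncorrelated noise sources add in power, so the largest one dominates the total. The sketch below uses a hypothetical noise budget (front end limiting SNR to 70 dB, oscillator to 100 dB, then an oscillator 20 dB better); these figures are illustrative assumptions, not measurements from the systems described above.

```python
import math


def combined_snr_db(*snrs_db: float) -> float:
    """Combine the SNR limits of independent noise sources.

    Each limit is converted to a noise power relative to the signal;
    uncorrelated noise powers add, and the sum is converted back to dB.
    """
    total_noise = sum(10.0 ** (-s / 10.0) for s in snrs_db)
    return -10.0 * math.log10(total_noise)


# Hypothetical budget: front end limits SNR to 70 dB, oscillator to 100 dB.
before = combined_snr_db(70.0, 100.0)
# Swap in an oscillator that is 20 dB better (120 dB limit).
after = combined_snr_db(70.0, 120.0)
print(f"before: {before:.4f} dB, after: {after:.4f} dB, "
      f"improvement: {after - before:.4f} dB")
```

Under these assumptions the 20 dB oscillator upgrade buys well under 0.01 dB of total SNR, far too small to detect; only reducing the dominant (front-end or processing) term moves the result.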

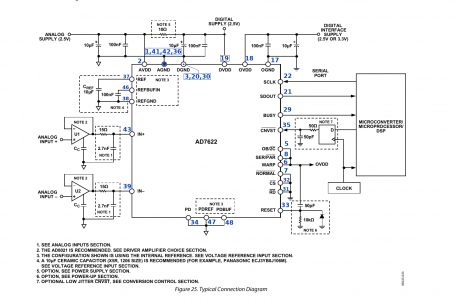

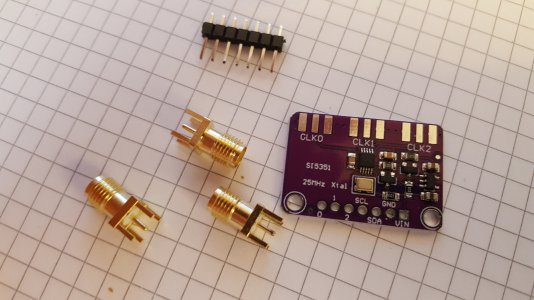

An ADC triggered synchronously from a quality time base, typically a crystal oscillator distributed through quality clock drivers, usually gives very good results. If a processor triggers it, that's really not going to work. The data collection can be processor driven and have some jitter, but the trigger cannot. Some processors even dither their clock so they will pass emissions standards; the dither simply introduces noise into the trigger, which in turn injects noise into the sampled data.
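A rough way to see the trigger-jitter effect is to simulate it. This pure-Python sketch samples a unit-amplitude sine at nominal instants plus a Gaussian timing error and measures the resulting SNR, then compares it with the -20·log10(2π·f_in·t_j) rule of thumb. The 1 ps figure stands in for a crystal-clocked trigger and 1 ns for a processor-driven or dithered one; both values, the 10 MS/s rate, and modelling clock dither as random Gaussian jitter (real spread-spectrum dither is a slower, structured frequency modulation) are simplifying assumptions.

```python
import math
import random


def sampled_snr_db(f_in_hz: float, fs_hz: float, rms_jitter_s: float,
                   n_samples: int = 20000, seed: int = 1) -> float:
    """Estimate SNR of a unit-amplitude sine sampled with Gaussian trigger jitter.

    Only timing error is modelled; quantization and front-end noise are ignored.
    """
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    signal_power = 0.0
    error_power = 0.0
    for n in range(n_samples):
        t_ideal = n / fs_hz                                # where the trigger should fire
        t_actual = t_ideal + rng.gauss(0.0, rms_jitter_s)  # where it actually fires
        ideal = math.sin(2.0 * math.pi * f_in_hz * t_ideal)
        actual = math.sin(2.0 * math.pi * f_in_hz * t_actual)
        signal_power += ideal * ideal
        error_power += (actual - ideal) ** 2               # jitter-induced error
    return 10.0 * math.log10(signal_power / error_power)


# Hypothetical numbers: 10 MS/s sampling of a ~1 MHz tone.
f_in, fs = 1.0123e6, 10e6
for label, jitter in [("crystal-clocked trigger", 1e-12),
                      ("processor/dithered trigger", 1e-9)]:
    measured = sampled_snr_db(f_in, fs, jitter)
    theory = -20.0 * math.log10(2.0 * math.pi * f_in * jitter)
    print(f"{label}: measured {measured:.1f} dB, theory {theory:.1f} dB")
```

Because the error scales linearly with the timing error, each thousandfold increase in trigger jitter costs about 60 dB of jitter-limited SNR in this model, which is why the trigger, rather than the data collection, is the place to avoid processor timing.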